While that should cover the majority of wget use cases, the downloader is capable of much more. -no-verbose turns off log messages but displays error messages.-v explicitly enables wget’s default of verbose output.-q turns off all of wget’s output, including error messages.-o path/to/log.txt enables logging output to the specified directory instead of displaying the log-in standard output.-t 10 will try to download the resource up to 10 times before failing.-c/ -continue will continue downloads of partially downloaded files.-nc/ -no-clobber will not overwrite files that already exist in the destination.The input file must be an HTML file or be parsed as HTML with the additional flag -force-html -i file specifies target URLs from an input file.The * character can be used as a wildcard, like “*.png,” which would skip all files with the PNG extension. In this case it will exclude all the index files. -R index.html/ -reject index.html will skip any files matching the specified file name.For example, -nH -cut-dirs=1 would change the specified path of “/pub/xemacs/” into simply “/xemacs/,” reducing the number of empty parent directories in the local download. -cut-dirs=# skips the specified number of directories down the URL before starting to download files.Remember, the hostname is the part of the URL that contains the domain name and ends in a TLD like “.com.” For example, the folder named “in our previous example would be skipped, starting the download with the “History” directory instead. -X /absolute/path/to/directory will exclude a specific directory on the remote server.In addition to the flags above, this selected handful of wget’s flags are the most useful: Controlling the download In general, it’s a good idea to disable robots.txt to prevent abridged downloads. That command also includes -e robots=off, which ignores restrictions in the robots.txt file. 
This will cause wget to follow any links found on the documents within the specified directory, recursively downloading the entire specified URL path. To download an entire directory tree with wget, you need to use the -r/–recursive and -np/–no-parent flags, like so: wget -e robots=off -r -np If the -O flag is excluded, the specified URL will be downloaded to the present working directory. That will save the file specified in the URL to the location specified on your machine. The command’s structure works like so: wget -O path/to/py

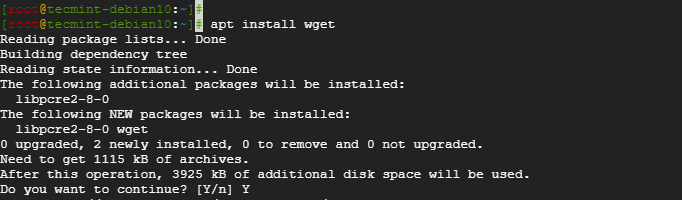

Like the similar command curl, wget takes a remote resource from a URL and saves it to a specified location on your computer. It’s a quick and simple non-interactive tool for downloading files from any publicly accessible URL. The purpose of wget is downloading content from URLs. If you already have Homebrew installed, be sure to run brew update to get the latest copies of all your formulae. You’ll get live updates on the progress of downloading and installing whatever dependencies (software prerequisites) are required to run wget on your system. In Terminal, run the following command to download and install wget: Once it has completed installing itself, we will use Homebrew to install wget. You might notice the command called curl, which is a different command-line utility for downloading files from a URL that ships within the Ruby installation included on macOS. To install Homebrew, open a Terminal window and execute the following command taken from Homebrew’s website: /usr/bin/ruby -e "$(curl -fsSL )" While wget isn’t shipped with macOS, it can be easily downloaded and installed with Homebrew, the best Mac package manager available.

The program was designed especially for poor connections, making it especially robust in otherwise flaky conditions. Because it is non-interactive, wget can work in the background or before the user even logs in. Wget is a non-interactive command-line utility for download resources from a specified URL.
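To see how the recursive-download flags described in this article fit together, here is a minimal sketch in POSIX shell. It only prints the assembled command rather than running it, so it is safe to try offline. The function name `build_mirror_cmd`, the `example.com` URL, and the `wget.log` file name are placeholders invented for illustration and are not part of wget or of the original walkthrough.

```shell
#!/bin/sh
# build_mirror_cmd: print (but do not run) a wget command line for a
# recursive download. The name and the chosen flag set are illustrative.
build_mirror_cmd() {
  url=$1
  # Flags, in order: ignore robots.txt; recurse without climbing to the
  # parent directory; drop the hostname folder and one leading directory;
  # reject index.html; keep existing files; retry up to 10 times; log to
  # a file instead of the terminal.
  set -- \
    -e robots=off \
    -r -np \
    -nH --cut-dirs=1 \
    -R index.html \
    -nc \
    -t 10 \
    -o wget.log
  printf '%s\n' "wget $* $url"
}

# Hypothetical URL, used only to show the shape of the final command.
build_mirror_cmd "https://example.com/pub/xemacs/"
```

To actually start the download instead of printing the command, the `printf` line could be swapped for `wget "$@" "$url"`. Printing first is a cheap way to double-check the flags before pointing wget at a large directory tree.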
